Tutorials/QAQC

Geodata spatial data quality assessment and correction process for RBIS data

Introduction

Spatial data is a powerful form of information, capable of providing insights of great interest and use to the user. However, as with other data representing the ‘real world’, its precision and accuracy must be high if the results of data analysis are to be deemed reliable and applied to real-world projects and undertakings.

The quality of spatial data, and how it should be assessed, has been a topic of discussion since GIS and spatial analysis first entered academia and real-world applications. Each dataset represents a specific area, a specific subject matter and, possibly, a specific point in time. Furthermore, each dataset has very likely come to exist as the result of multiple data producers and data production techniques, each with its own parameters and working environments and thus its own potential sources of error. This combination of factors means that there is scope for a large number of errors to arise within the data. As far as possible, the quality of such data should be assessed, to avoid reproducing the errors in future analyses that use the datasets in question.

Ideally, such a control process would take place at the point at which a dataset is first created. However, datasets are often created, gathered and shared by multiple users at multiple locations. This leads to the immediate duplication of datasets and allows errors to replicate rapidly and on a very large scale. If QA/QC was not undertaken on the very first version of a dataset, many copies containing errors or flaws will proliferate throughout the user community, often without users knowing the quality of the data they are working with. A mid-level (in terms of the data usage workflow) QA/QC process should therefore be adopted by all users of spatial data, to stop the spread of error as early as possible.

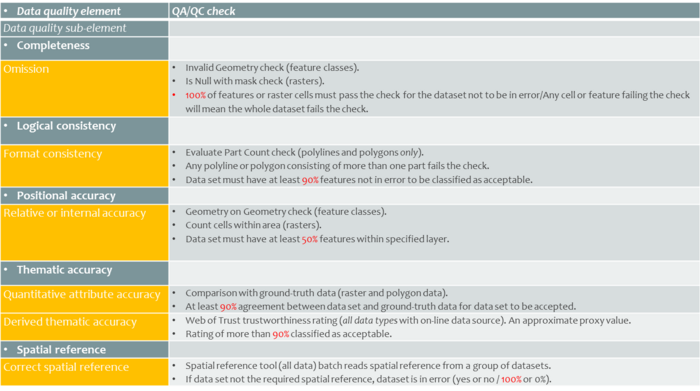

To allow such a process to be applied by many users across many fronts, the adoption of QA/QC standards, in this case ISO QA/QC standards, is also recommended. Such standards have two advantages. First, they were developed by professionals in the field of spatial data quality with the express purpose of correcting and maintaining a high quality of spatial data. Second, if adopted by enough users, the standards and the processes used to apply them can be used across the world, providing a set of standardized data quality measures and, subsequently, a set of standardized, sufficiently and accurately corrected final datasets that the user community can work with reliably.

Here, a process developed in the course of an MSc thesis is presented in guideline form. This process adopts ISO QA/QC standards and processing workflow, applied using ArcGIS and ArcGIS Data Reviewer software, and is set up as a step-by-step guide on how to correct spatial data to be used in the context of RBIS. The workflow and steps presented here are, however, neither fixed nor final, as data types or data themes not currently present in RBIS may require a different set of QA/QC measures and thus different QA/QC measurement steps. Should such additional or alternative steps be required, it is highly probable that this guideline will be extended and provided as a newer version via the FSU Geoinformatics department data services.

1. Overview